Publications

(NeurIPS workshop 2019) Reinforcement learning with a network of spiking agents [Paper]

Sneha Aenugu, Abhishek Sharma, Sasi Kiran Yelamarthi, Hananel Hazan, Philip S. Thomas, Robert Kozma

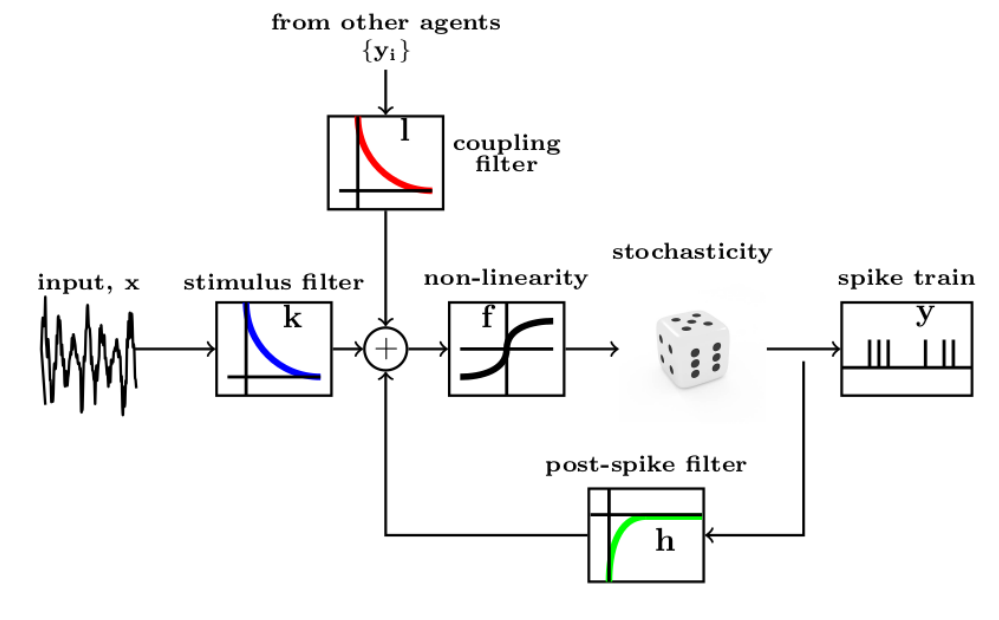

Neuroscientific theory suggests that dopaminergic neurons broadcast global reward prediction errors to large areas of the brain, influencing the synaptic plasticity of the neurons in those regions. We build on this theory to propose a multi-agent learning framework for solving reinforcement learning (RL) tasks, in which the agents are spiking neurons in the generalized linear model (GLM) formulation. We show that a network of GLM spiking agents connected in a hierarchical fashion, where each spiking agent modulates its firing policy based on local information and a global prediction error, can learn complex action representations to solve RL tasks. We further show how brain-inspired principles of modularity and population coding can help reduce the variance of the learning updates, making this a viable optimization technique.
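
The core mechanism, each agent combining local information with one globally broadcast prediction error, can be illustrated with a minimal sketch. It assumes Bernoulli-logistic GLM neurons (spike probability sigmoid(w·x + b)) and a REINFORCE-style update in which each agent scales its local score (s - p)·x by the shared error `delta`. This is not necessarily the paper's exact rule. The hypothetical `GLMAgent`, `choose_action`, `train` and `accuracy` helpers, the toy two-state bandit task, the single layer of agents (rather than a hierarchy), the population sizes, the learning rates and the running-average reward baseline are all illustrative assumptions.

```python
import math
import random


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class GLMAgent:
    """Hypothetical Bernoulli-logistic GLM neuron: spikes with p = sigmoid(w.x + b)."""

    def __init__(self, n_inputs: int, rng: random.Random):
        self.w = [rng.gauss(0.0, 0.1) for _ in range(n_inputs)]
        self.b = 0.0
        self.rng = rng

    def fire(self, x):
        # Sample a spike and keep the local quantities the update needs.
        self.x = x
        self.p = sigmoid(sum(wi * xi for wi, xi in zip(self.w, x)) + self.b)
        self.s = 1 if self.rng.random() < self.p else 0
        return self.s

    def update(self, delta: float, lr: float):
        # Global prediction error times the local score (s - p) * x.
        g = self.s - self.p
        self.w = [wi + lr * delta * g * xi for wi, xi in zip(self.w, self.x)]
        self.b += lr * delta * g


def choose_action(pops, x, rng):
    # Population coding: the population that emits more spikes picks the action.
    counts = [sum(agent.fire(x) for agent in pop) for pop in pops]
    if counts[0] == counts[1]:
        return rng.randrange(2)  # break ties at random
    return 0 if counts[0] > counts[1] else 1


def one_hot(state: int):
    return [1.0 if i == state else 0.0 for i in range(2)]


def train(n_per_action=8, episodes=3000, lr=0.5, seed=0):
    rng = random.Random(seed)
    # One population of agents per action in a toy two-state bandit task.
    pops = [[GLMAgent(2, rng) for _ in range(n_per_action)] for _ in range(2)]
    baseline = 0.0
    for _ in range(episodes):
        state = rng.randrange(2)
        action = choose_action(pops, one_hot(state), rng)
        reward = 1.0 if action == state else 0.0
        delta = reward - baseline  # global error broadcast to every agent
        baseline += 0.05 * (reward - baseline)
        for pop in pops:
            for agent in pop:
                agent.update(delta, lr)
    return pops


def accuracy(pops, trials=1000, seed=1):
    # Fraction of trials where the population vote picks the rewarded action.
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        state = rng.randrange(2)
        hits += choose_action(pops, one_hot(state), rng) == state
    return hits / trials
```

For example, `accuracy(train())` should end up well above the 0.5 chance level of an untrained network, although the exact value depends on the seed. Because every agent receives the same scalar error, credit assignment is noisy: an agent in the losing population can be reinforced simply because the other population won. This is the kind of variance that modularity and population coding are meant to reduce.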

(ECCV 2018) A Zero-Shot Framework for Sketch-based Image Retrieval [Paper] [Code]

Sasi Kiran Yelamarthi, Shiva Krishna Reddy, Ashish Mishra, Anurag Mittal

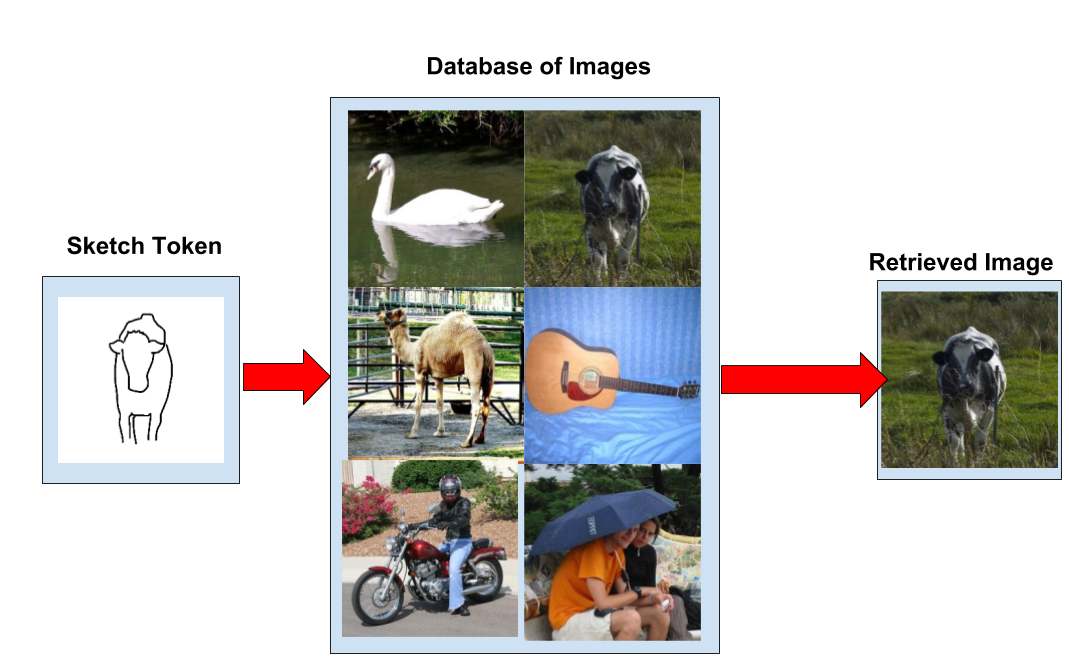

Sketch-based image retrieval (SBIR), similar to image search, involves retrieving all the relevant images in a database given a user's hand-drawn sketch as the query. Existing SBIR methods fail to generalize to unseen classes of sketches. In this work, we propose a new benchmark for the zero-shot setting of SBIR and show that existing methods, which are trained in a discriminative setting, learn only class-specific mappings and fail to generalize to the proposed zero-shot setting. To circumvent this, we propose a generative approach to SBIR based on deep conditional generative models, which take the sketch as input and fill in the missing information stochastically.
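
At retrieval time, a generative approach of this kind can map a sketch into the image-feature space by sampling several candidate image features, then rank the gallery by similarity to them. The following minimal sketch assumes precomputed feature vectors. It uses a hypothetical `toy_generator` (a fixed scaling plus Gaussian noise) in place of a trained conditional generative model. The `retrieve` helper, the averaging of samples and the use of cosine similarity are illustrative choices, not the paper's exact pipeline. Because ranking depends only on feature similarity, the same step applies unchanged to gallery classes never seen during training.

```python
import math
import random
from typing import Callable, List, Sequence

Vector = List[float]
FeatureGenerator = Callable[[Sequence[float], random.Random], Vector]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na > 0 and nb > 0 else 0.0


def toy_generator(sketch_feat: Sequence[float], rng: random.Random) -> Vector:
    # Hypothetical stand-in for a trained conditional generator: the noise
    # plays the role of the stochastically filled-in missing information.
    return [2.0 * v + rng.gauss(0.0, 0.1) for v in sketch_feat]


def retrieve(sketch_feat: Sequence[float], generator: FeatureGenerator,
             gallery: Sequence[Sequence[float]], n_samples: int = 10,
             top_k: int = 3, seed: int = 0) -> List[int]:
    """Return indices of the top_k gallery features closest to the generated query."""
    rng = random.Random(seed)
    samples = [generator(sketch_feat, rng) for _ in range(n_samples)]
    # Average the stochastic samples into a single query in image-feature space.
    query = [sum(col) / n_samples for col in zip(*samples)]
    scores = sorted(((cosine(query, feat), idx) for idx, feat in enumerate(gallery)),
                    reverse=True)
    return [idx for _, idx in scores[:top_k]]


# Usage with a tiny hypothetical gallery of image features.
gallery = [[0.0, 1.0, 0.0], [0.9, 0.1, 0.0], [0.0, 0.0, 1.0], [1.0, 0.05, 0.0]]
print(retrieve([1.0, 0.0, 0.0], toy_generator, gallery, top_k=2))
```

With this toy gallery, the sketch feature `[1.0, 0.0, 0.0]` should rank highest the two gallery vectors that point mostly along the first axis. Averaging is only one way to combine the samples. Scoring each gallery item by its best match over the individual samples is an equally plausible alternative.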